Kinect Calibration

Research.KinectCalibration History

Hide minor edits - Show changes to markup

NOTE: OpenNI and recent versions of Freenect generally do not need a custom calibration.

NOTE: version 0.5 and higher of RGBDemo do not require manual annotations.

Also check this webpage for more information on automatic calibration: Ros Kinect

Also check this webpage for more information on automatic calibration: Ros Kinect

P3D.z = depth(x,y)

P3D.z = depth(x_d,y_d)

(:title Kinect:)

(:title Kinect Calibration:)

Note that another transformation claimed to be more accurate was proposed there: [http://groups.google.com/group/openkinect/browse_thread/thread/31351846fd33c78/e98a94ac605b9f21?lnk=gst&q=stephane#e98a94ac605b9f21 Stephane Magnenat post] but I haven’t tested it yet.

Note that another transformation claimed to be more accurate was proposed there: Stephane Magnenat post but I haven’t tested it yet.

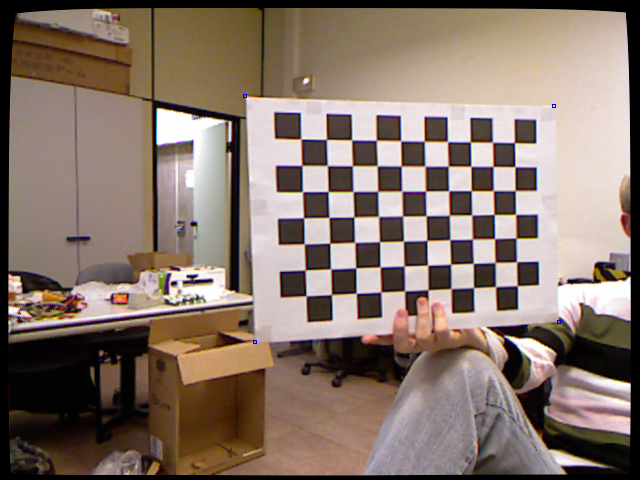

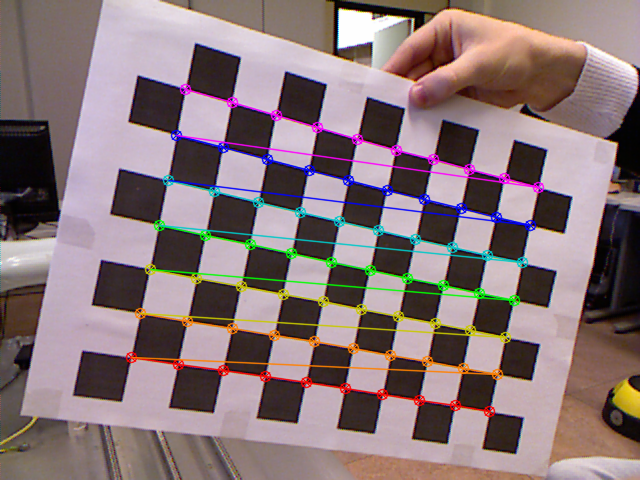

Stereo calibration

Selecting also the corners of the chessboard on the color images (they would also be easy to extract automatically using the chessboard recognition), standard stereo calibration can be performed.

Mapping depth pixels with color pixels

The first step is to undistort rgb and depth images using the estimated distortion coefficients. Then, using the depth camera intrinsics, each pixel (x_d,y_d) of the depth camera can be projected to metric 3D space using the following formula:

Transformation of raw depth values into meters

Raw depth values are integer between 0 and 2047. They can be transformed into depth in meters using the parameters given on the Ros Kinect page.

P3D.x = (x_d - cx_d) * depth(x_d,y_d) / fx_d P3D.y = (y_d - cy_d) * depth(x_d,y_d) / fy_d P3D.z = depth(x,y)

float raw_depth_to_meters(int raw_depth) {

if (raw_depth < 2047)

{

return 1.0 / (raw_depth * −0.0030711016 + 3.3309495161);

}

return 0;

}

with fx_d, fy_d, cx_d and cy_d the intrinsics of the depth camera.

We can then reproject each 3D point on the color image and get its color:

Note that another transformation claimed to be more accurate was proposed there: [http://groups.google.com/group/openkinect/browse_thread/thread/31351846fd33c78/e98a94ac605b9f21?lnk=gst&q=stephane#e98a94ac605b9f21 Stephane Magnenat post] but I haven’t tested it yet.

Stereo calibration

Selecting also the corners of the chessboard on the color images (they would also be easy to extract automatically using the chessboard recognition), standard stereo calibration can be performed.

Mapping depth pixels with color pixels

The first step is to undistort rgb and depth images using the estimated distortion coefficients. Then, using the depth camera intrinsics, each pixel (x_d,y_d) of the depth camera can be projected to metric 3D space using the following formula:

P3D’ = R.P3D + T P 2 D_rgb.x = (P3D’.x * fx_rgb / P3D’.z) + cx_rgb P 2 D_rgb.y = (P3D’.y * fy_rgb / P3D’.z) + cy_rgb

P3D.x = (x_d - cx_d) * depth(x_d,y_d) / fx_d P3D.y = (y_d - cy_d) * depth(x_d,y_d) / fy_d P3D.z = depth(x,y)

with R and T the rotation and translation parameters estimated during the stereo calibration.

The parameters I could estimate for my Kinect are:

- Color

with fx_d, fy_d, cx_d and cy_d the intrinsics of the depth camera.

We can then reproject each 3D point on the color image and get its color:

Also check this webpage for more information on automatic calibration: [http://www.ros.org/wiki/kinect_node|Ros Kinect]

Also check this webpage for more information on automatic calibration: Ros Kinect

Here is a preliminary semi-automatic way to calibrate the Kinect depth sensor and the rgb output to enable a mapping between them. You can see a result there:

Here is a preliminary semi-automatic way to calibrate the Kinect depth sensor and the rgb output to enable a mapping between them. You can see some results there:

Also check this webpage for more information on automatic calibration: [http://www.ros.org/wiki/kinect_node|Ros Kinect]

ROS calibration files

ROS calibration files

ROS calibration files

Here are some calibration files in ROS (http://code.ros.org) format. Attach:calibration_rgb.yaml Attach:calibration_depth.yaml

Here is a preliminary semi-automatic way to calibrate the Kinect depth sensor and the rgb output to enable a mapping between them. You can see a result there: (:youtube BVxKvZKKpds:)

Here is a preliminary semi-automatic way to calibrate the Kinect depth sensor and the rgb output to enable a mapping between them. You can see a result there: (:youtube BVxKvZKKpds:)

Here is a preliminary semi-automatic way to calibrate the Kinect depth sensor and the rgb output to enable a mapping between them.

Here is a preliminary semi-automatic way to calibrate the Kinect depth sensor and the rgb output to enable a mapping between them. You can see a result there: (:youtube BVxKvZKKpds:)

First step is to undistort rgb and depth images using the estimated distortion coefficients. Then, using the depth camera intrinsics, each pixel (x_d,y_d) of the depth camera can be projected to metric 3D space using the following formula:

The first step is to undistort rgb and depth images using the estimated distortion coefficients. Then, using the depth camera intrinsics, each pixel (x_d,y_d) of the depth camera can be projected to metric 3D space using the following formula:

@@

[@

@@

@]

@@

[@

@@

@]

[@

@]

[@

@]

[@

-1.0916736334336222e-02 ]

-1.0916736334336222e-02 ] @]

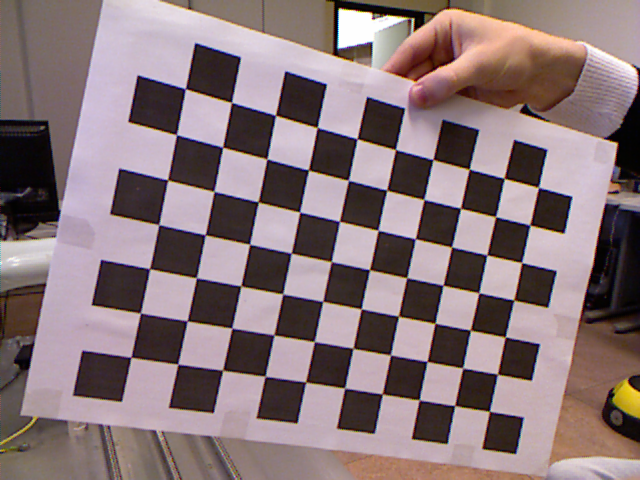

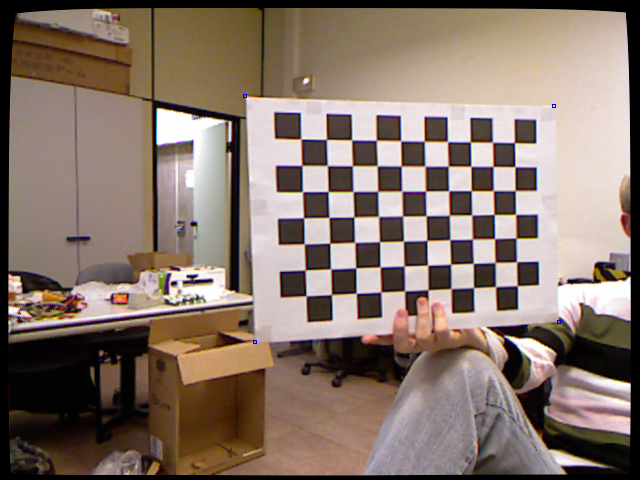

Calibration of the color camera intrinsics

The color camera intrinsics can be calibrated using standard chessboard recognition.

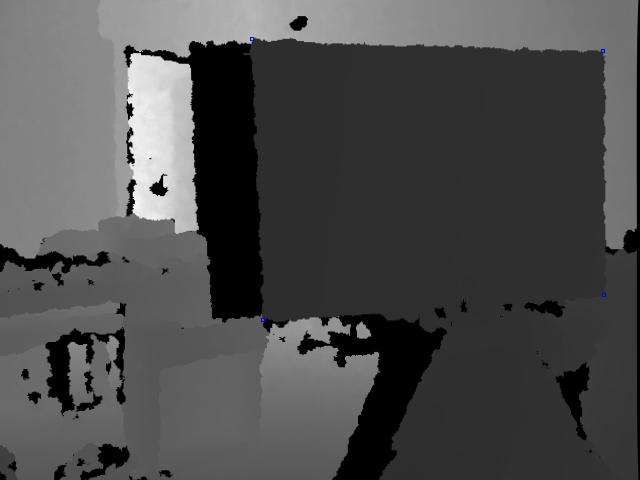

Calibration of the depth camera intrinsics

This is done by extracting the corners of the chessboard on the depth image and storing them. This could be done automatically, but right now I selected them by hand using a small utility program.

Stereo calibration

Selecting also the corners of the chessboard on the color images (they would also be easy to extract automatically using the chessboard recognition), standard stereo calibration can be performed.

Mapping depth pixels with color pixels

First step is to undistort rgb and depth images using the estimated distortion coefficients. Then, using the depth camera intrinsics, each pixel (x_d,y_d) of the depth camera can be projected to metric 3D space using the following formula:

@@ P3D.x = (x_d - cx_d) * depth(x_d,y_d) / fx_d P3D.y = (y_d - cy_d) * depth(x_d,y_d) / fy_d P3D.z = depth(x,y) @@

with fx_d, fy_d, cx_d and cy_d the intrinsics of the depth camera.

We can then reproject each 3D point on the color image and get its color:

@@ P3D’ = R.P3D + T P 2 D_rgb.x = (P3D’.x * fx_rgb / P3D’.z) + cx_rgb P 2 D_rgb.y = (P3D’.y * fy_rgb / P3D’.z) + cy_rgb @@

with R and T the rotation and translation parameters estimated during the stereo calibration.

The parameters I could estimate for my Kinect are:

- Color

fx_rgb 5.2921508098293293e+02 fy_rgb 5.2556393630057437e+02 cx_rgb 3.2894272028759258e+02 cy_rgb 2.6748068171871557e+02 k1_rgb 2.6451622333009589e-01 k2_rgb −8.3990749424620825e-01 p1_rgb −1.9922302173693159e-03 p2_rgb 1.4371995932897616e-03 k3_rgb 9.1192465078713847e-01

- Depth

fx_d 5.9421434211923247e+02 fy_d 5.9104053696870778e+02 cx_d 3.3930780975300314e+02 cy_d 2.4273913761751615e+02 k1_d −2.6386489753128833e-01 k2_d 9.9966832163729757e-01 p1_d −7.6275862143610667e-04 p2_d 5.0350940090814270e-03 k3_d −1.3053628089976321e+00

Relative transform between the sensors (in meters) R [ 9.9984628826577793e-01, 1.2635359098409581e-03, -1.7487233004436643e-02, −1.4779096108364480e-03, 9.9992385683542895e-01, −1.2251380107679535e-02, 1.7470421412464927e-02, 1.2275341476520762e-02, 9.9977202419716948e-01 ]

T [ 1.9985242312092553e-02, −7.4423738761617583e-04, -1.0916736334336222e-02 ]

Calibrating the depth and color camera

Here is a preliminary semi-automatic way to calibrate the Kinect depth sensor and the rgb output to enable a mapping between them.

It is basically a standard stereo calibration technique (http://opencv.willowgarage.com/documentation/cpp/camera_calibration_and_3d_reconstruction.html), the main difficulty comes from the depth image that cannot detect patterns on a flat surface. Thus, the pattern has to be created using depth difference.

Here I used a rectangular peace of carton cut around a chessboard printed on an A3 sheet of paper.